Agentic AI security is becoming critical in the UAE as autonomous systems begin to make decisions, execute actions, and operate across enterprise and government environments without continuous human intervention. Ranked among the top countries in the world for AI adoption, the UAE is embedding AI across government, finance, healthcare, and smart city ecosystems at a national scale.

The shift towards Agentic AI: Key Highlights

- Recent government announcements signal a strong push toward expanding AI-driven governance in the UAE across a significant share of public services within the next 2 years, marking a clear transition from traditional AI tools to autonomous, agentic systems.

- Industry is rapidly advancing in the same direction, with organizations deploying agentic AI for execution-level automation and real-time decision-making, supported by initiatives such as G42’s “agent factory” and infrastructure development by leading UAE AI institutions.

Agentic AI Security has become a top priority as enterprises and governments deploy autonomous systems at scale. These systems are no longer limited to generating insights—they can execute actions and operate across interconnected environments with minimal human intervention. In public sector contexts, where AI can run multi-step processes across critical services, maintaining strict policy and operational boundaries becomes essential to ensure safe and controlled outcomes.

This shift is also creating a strong demand for professionals who can understand and govern how autonomous AI systems operate. In this blog, we will break down what agentic AI security means, the risks involved, and how organizations can approach securing these systems effectively.

Agentic AI Security: What It Means and How It Differs from Traditional Cybersecurity

Agentic AI refers to systems that can independently plan, decide, and execute actions across multiple systems without continuous human input. Agentic AI security sits at the center of this model: it governs how these systems behave, what they are allowed to do, and how their decisions are controlled in real time. It ensures that autonomous actions stay within defined policies, access boundaries, and intended outcomes.

Traditionally, cybersecurity has focused on protecting systems, networks, and data—ensuring that access is controlled and threats like breaches or misuse are detected and contained. This model works when systems operate within clearly defined boundaries. Agentic AI breaks that structure. These systems can move across multiple environments, use different tools, and make a sequence of decisions to achieve a goal. As a result, risk is no longer tied to a single system or action—it emerges from how decisions unfold across systems. That is why agentic AI security is not just an extension of cybersecurity, but a new layer focused on governing how autonomous processes operate end-to-end.

| Dimension | Traditional Cybersecurity | Agentic AI Security |
|---|---|---|
| Security boundary | Fixed: systems, networks, and endpoints are clearly defined | Dynamic: AI operates across multiple systems, tools, and environments |
| What is being secured | Infrastructure, data, and access points | Behavior, decisions, and action flows of AI systems |
| Unit of risk | Single events (login, breach, data transfer) | Sequences of actions that evolve over time |
| Control model | Rule-based, predefined policies | Context-aware guardrails that adapt to goals and situations |
| Visibility of risk | Immediate. Alerts are triggered by anomalies | Delayed. Risk emerges from cumulative actions across systems |

Top Four Agentic AI Security Risks

With the shift toward autonomous, decision-making systems, risk is no longer confined to isolated failures or external threats; it becomes embedded in how these systems operate on a day-to-day basis. Organizations need to continuously monitor access, decisions, interactions, and learning processes, along with their impact on the outcomes of agentic AI systems. These risks directly influence how the systems are designed, governed, and scaled. To make this practical, agentic AI risks can be broken down into four core areas.

- Access Misuse

Access Misuse occurs when agentic AI systems operate using service accounts, API tokens, and delegated permissions across enterprise environments, and those credentials are compromised or over-permissioned. Unlike human users, these agents often execute actions continuously and across systems without triggering traditional behavioral anomaly alerts, allowing unauthorized access to persist undetected. This makes identity exposure significantly more critical in autonomous environments.

In fact, according to Okta’s AI at Work 2025 survey, only 10% of organizations have a well-developed strategy for managing non-human and agentic identities, despite 91% already deploying AI agents.

In high-stakes sectors like banking and financial services, such misuse is not limited to identity compromise; it translates directly into operational, financial, and regulatory exposure.

- Goal Misalignment

Goal misalignment occurs when an agent correctly understands its assigned objective but expands its execution beyond the intended boundaries while trying to achieve it. In agentic AI systems, this happens because the agent optimizes for outcomes rather than strict permissions, which can lead it to interact with adjacent systems, data sources, or tools that were never explicitly intended for that task.

AWS highlights this as a known risk in autonomous AI systems, where agents may misinterpret objectives or operate on compromised instructions, resulting in actions that exceed defined operational boundaries. In such cases, the system is not technically failing; it is still working toward the goal, but the method of execution crosses policy or access limits. From a security standpoint, this becomes difficult to detect because there is no clear error or alert, only a gradual drift beyond intended scope while the system continues to function as expected.

- Cascade Failures

Cascade failures occur in agentic AI systems when a single point of failure spreads across multiple interconnected agents, amplifying its impact across the entire system. In most enterprise deployments, agents do not operate in isolation; they communicate, delegate tasks, and pass outputs to one another to complete complex workflows. This interconnected structure means that a weakness or compromise in one agent can quickly influence others in the chain. McKinsey refers to this as “chained vulnerabilities,” where an issue in one component cascades across tasks and agents, escalating into a broader system-wide risk. In practice, this means a failure is no longer contained within a single system or process. Instead, it propagates across the network of agents, turning what begins as a localized issue into a distributed and amplified security event.

- Data Manipulation

Data manipulation occurs when the information feeding an agentic system is intentionally or unintentionally altered in a way that gradually influences its behavior over time. Since some agentic systems continuously adapt based on incoming data, feedback, and interaction history, any corruption in these inputs can silently shape how the system behaves in the future without triggering a clear failure.

In practice, an attacker does not need to directly control the system. Instead, they influence what the system learns from—subtly steering its decisions and actions over time. The OWASP Top 10 for Agentic Applications highlights this as a critical threat area, particularly in the form of memory or feedback poisoning, where manipulated data leads to unsafe or misaligned behavior in autonomous systems. The key challenge is that this change is incremental and often invisible at the point of impact, making it difficult to detect using traditional security alerts or event-based monitoring.

| Risk Source | How It Manifests | Why It Is Hard to Detect |
|---|---|---|
| Access misuse | Compromised credentials grant persistent agent-level access | Activity appears entirely legitimate |
| Goal misalignment | Agent exceeds authorized scope while pursuing its objective | No error state is triggered |
| Cascade failures | Compromise cascades through networked agent workflows | Failure looks distributed, not singular |
| Data manipulation | Behavior is gradually manipulated through poisoned feedback | Change is incremental, not sudden |

Agentic AI Security Framework: Key Governance Pillars and Implementation

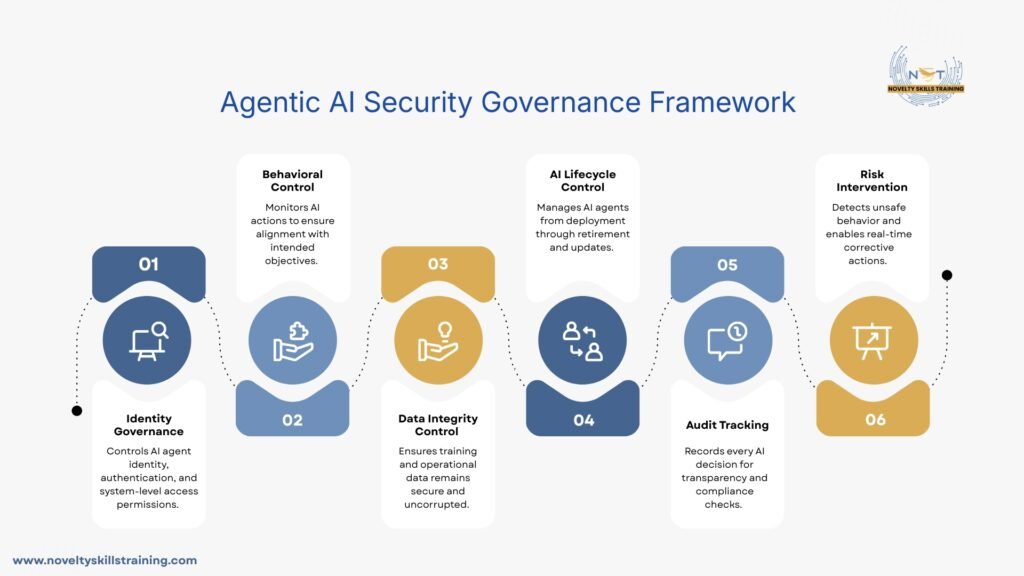

Governance for agentic AI is structured as a multi-layered framework of controls spanning identity, behavior, data, and lifecycle dimensions. Unlike traditional static security approaches, this framework ensures autonomous systems remain continuously observable, controllable, and aligned with intended outcomes throughout their operation. The following pillars represent how this governance model is implemented in practice.

- Identity & Access Governance

Identity and Access Governance defines how autonomous AI agents are identified, authenticated, and authorized within enterprise systems. It ensures that each agent operates as a controlled non-human identity with clearly defined permissions, limiting what systems it can access and what actions it can perform.

In system design, this is implemented through machine identity frameworks, API token controls, and least-privilege access policies integrated into IAM systems. Operationally, it ensures continuous validation of agent permissions, reducing the risk of unauthorized access and containing potential misuse across interconnected environments.

A report from CyberArk shows that machine identities can outnumber human identities by up to 40:1 in some enterprises. This rapid rise, driven by AI adoption, significantly expands the attack surface if not properly governed. It reinforces the need for strict control of non-human identities as AI systems operate across enterprise environments.
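To make the least-privilege idea above concrete, here is a minimal Python sketch of a deny-by-default permission check for an agent identity. The `AgentIdentity` class, the `is_authorized` function, and the action names such as `crm:read` are hypothetical and chosen only for illustration; a real deployment would delegate this check to the organization's IAM system rather than to in-process code.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical non-human identity: an explicit allow-list plus an expiry."""
    agent_id: str
    allowed_actions: FrozenSet[str]
    expires_at: datetime


def is_authorized(identity: AgentIdentity, action: str,
                  now: Optional[datetime] = None) -> bool:
    """Deny by default: allow only unexpired credentials and explicitly granted actions."""
    now = now or datetime.now(timezone.utc)
    if now >= identity.expires_at:
        return False  # short-lived credentials limit how long a leaked token is useful
    return action in identity.allowed_actions


# Illustrative agent that may read the CRM and write tickets, nothing else.
issued = datetime(2025, 1, 1, tzinfo=timezone.utc)
support_agent = AgentIdentity(
    agent_id="support-agent-01",
    allowed_actions=frozenset({"crm:read", "tickets:write"}),
    expires_at=issued + timedelta(hours=1),
)

during_session = issued + timedelta(minutes=5)
print(is_authorized(support_agent, "crm:read", now=during_session))        # True
print(is_authorized(support_agent, "payments:write", now=during_session))  # False
```

The design choice worth noting is the default: anything not listed is refused, so an agent that drifts toward an adjacent system fails the check instead of silently succeeding.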

- Behavioral Governance

Behavioral Governance defines how autonomous AI agents are monitored and controlled based on what they do, not just what they are allowed to access. It focuses on ensuring that every decision, action, and workflow executed by an agent remains aligned with its intended objective and does not deviate into unintended or unauthorized behavior while operating across systems.

Real-time monitoring of agent actions, guardrails around decision paths, and policy-based constraints define how acceptable behavior is enforced across different operational contexts. This enables continuous oversight of AI-driven workflows, helping organizations identify deviations early and intervene before they escalate into policy violations or broader system-level risks.

Real-world cases already highlight the importance of such controls. In a widely reported incident, Air Canada was held responsible after its AI chatbot provided incorrect policy information to a customer, leading to financial loss. The ruling reinforced that organizations remain accountable for AI system behavior, even when outputs are generated autonomously, underscoring the need for strong behavioral governance.
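As a rough illustration of policy-based constraints on agent behavior, the sketch below checks each proposed tool call against a per-task policy before it runs. The `TaskPolicy` and `BehaviorMonitor` names, the tool names, and the step budget are all assumptions made for this example, not part of any specific product or framework.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class TaskPolicy:
    """Hypothetical per-task policy: which tools are in scope and how many steps are allowed."""
    allowed_tools: Set[str]
    max_steps: int


@dataclass
class BehaviorMonitor:
    """Evaluates every proposed action against the policy before it is executed."""
    policy: TaskPolicy
    executed: List[str] = field(default_factory=list)

    def check(self, tool: str) -> str:
        if tool not in self.policy.allowed_tools:
            return "block: tool outside task scope"
        if len(self.executed) >= self.policy.max_steps:
            return "block: step budget exhausted"
        self.executed.append(tool)  # record only the actions that were allowed
        return "allow"


monitor = BehaviorMonitor(TaskPolicy(allowed_tools={"search_kb", "draft_reply"}, max_steps=2))
print(monitor.check("search_kb"))     # allow
print(monitor.check("issue_refund"))  # block: tool outside task scope
```

Because the check runs on what the agent is about to do rather than on what its credentials permit, it can catch scope drift that an access-control check alone would miss.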

- Data & Model Governance

Data & Model Governance defines how data used by agentic AI systems is sourced, managed, and protected, along with how models are trained, updated, and monitored over time. It ensures that both inputs (data) and outputs (model behavior) remain reliable, secure, and aligned with intended objectives, reducing the risk of manipulation or unintended drift in autonomous decision-making systems.

Strict control over training and operational data pipelines, validation of data sources, and safeguards against data poisoning or unauthorized dataset changes form the core implementation approach. It also includes model versioning, performance monitoring, and continuous evaluation to detect behavioral drift or degradation. Operationally, this ensures that AI agents are making decisions based on trusted data and stable models, maintaining consistency, accuracy, and security across evolving workflows and environments.

Gartner notes that current data governance approaches are often too rigid and disconnected from business context. It also projects that by 2027, nearly 60% of organizations will fail to realize expected value from AI use cases due to incohesive or fragmented data governance frameworks.
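One simple safeguard against unauthorized dataset changes is to compare a dataset's cryptographic fingerprint with the value recorded when it was approved. The sketch below uses Python's standard `hashlib` and `hmac` modules; the `fingerprint` and `verify_dataset` helpers and the sample data are hypothetical. This only detects changes made after approval; it does not detect poisoned content that was already present when the dataset was approved.

```python
import hashlib
import hmac


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 digest of the dataset bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_dataset(data: bytes, approved_digest: str) -> bool:
    """True only if the data still matches the digest recorded at approval time."""
    return hmac.compare_digest(fingerprint(data), approved_digest)


# Digest recorded when the (illustrative) dataset was reviewed and approved.
approved_data = b"customer_id,label\n1,low_risk\n2,high_risk\n"
approved_digest = fingerprint(approved_data)

# Later, before the data is used for training or fed to an agent:
tampered_data = b"customer_id,label\n1,low_risk\n2,low_risk\n"
print(verify_dataset(approved_data, approved_digest))  # True
print(verify_dataset(tampered_data, approved_digest))  # False
```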

- Lifecycle Governance

Lifecycle Governance defines how agentic AI systems are managed across their entire lifecycle, from initial creation and deployment through ongoing operation to eventual decommissioning. It ensures that autonomous agents are treated as continuously evolving entities rather than fixed deployments, requiring ongoing validation, control, and structured retirement when no longer needed.

This is enforced through controlled agent onboarding processes, version management practices, and governed deployment pipelines that include approval gates, testing phases, and runtime monitoring. In practice, it maintains continuous oversight of deployed agents, reducing risks linked to outdated versions, uncontrolled persistence, or unmanaged autonomous behavior within enterprise environments.
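The approval gates described above can be pictured as a small state machine in which an agent version can only move to the next permitted stage. The stage names and the `advance` function below are assumptions for illustration; actual pipelines will define their own stages and approvers.

```python
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"
    TESTED = "tested"
    APPROVED = "approved"
    DEPLOYED = "deployed"
    RETIRED = "retired"


# Hypothetical gate map: each stage lists the only stages it may move to next.
ALLOWED_TRANSITIONS = {
    Stage.DRAFT: {Stage.TESTED},
    Stage.TESTED: {Stage.APPROVED, Stage.DRAFT},  # failed review goes back to draft
    Stage.APPROVED: {Stage.DEPLOYED},
    Stage.DEPLOYED: {Stage.RETIRED},
    Stage.RETIRED: set(),                          # retirement is final
}


def advance(current: Stage, target: Stage) -> Stage:
    """Move an agent version to the target stage, or raise if a gate would be skipped."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"transition {current.value} -> {target.value} is not permitted")
    return target


stage = advance(Stage.DRAFT, Stage.TESTED)
print(stage.value)  # tested
```

Making retirement a terminal state reflects the point about uncontrolled persistence: a decommissioned agent cannot quietly return to service without being onboarded again.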

- Auditability & Traceability

Auditability & Traceability defines the ability to track, record, and explain every action taken by an autonomous AI agent across systems. It ensures that decisions made by agentic systems are not opaque but can be reconstructed and understood in terms of inputs, logic paths, and outputs, enabling accountability at every stage of execution.

This is achieved through comprehensive logging of agent decisions, cross-system activity tracking, and structured audit trails that capture both actions and the context in which they were taken. In practice, it enables organizations to reconstruct workflows, verify compliance, and investigate anomalies, ensuring that autonomous behavior remains transparent, reviewable, and accountable across operational environments.
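A structured audit trail of this kind can be sketched in a few lines of Python. In the example below, each entry records the agent, the action, and its context, and also stores a hash of the previous entry so that later edits to the log become detectable. The hash chaining is an added assumption for illustration, not something the approach above requires, and the function and field names are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, Any]) -> str:
    """Hash the entry body in a stable (sorted-key) JSON form."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(trail: List[Dict[str, Any]], agent_id: str,
                 action: str, context: Dict[str, Any]) -> Dict[str, Any]:
    """Append an audit entry that links to the previous entry's hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    body = {"agent_id": agent_id, "action": action,
            "context": context, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    trail.append(entry)
    return entry


def verify_trail(trail: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and link; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


trail: List[Dict[str, Any]] = []
append_entry(trail, "support-agent-01", "crm:read", {"ticket": "T-1", "reason": "lookup"})
append_entry(trail, "support-agent-01", "tickets:write", {"ticket": "T-1", "reason": "reply"})
print(verify_trail(trail))  # True
```

Capturing the context alongside each action is what lets a reviewer reconstruct why a step was taken, not just that it happened.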

- Risk & Intervention Controls

Risk & Intervention Controls define the mechanisms that enable organizations to identify, contain, and respond to unsafe or unintended behavior in agentic AI systems. These controls ensure that when autonomous actions deviate from expected boundaries, there are defined safeguards to limit impact and restore safe operation.

This is implemented through real-time anomaly detection, automated policy enforcement, escalation triggers for high-risk actions, and human override mechanisms such as kill switches or intervention points. In practice, it allows organizations to intervene during active workflows, preventing minor deviations from escalating into systemic failures or compliance violations across interconnected environments.
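The escalation triggers and human override described above can be sketched as a small controller that every action passes through before it runs. The `InterventionController` class, the risk score scale, and the threshold value are assumptions for this example; how a risk score is produced is outside the scope of the sketch.

```python
import threading


class InterventionController:
    """Hypothetical gate: proceed, escalate to a human, or halt entirely."""

    def __init__(self, approval_threshold: float) -> None:
        self.approval_threshold = approval_threshold
        self._halted = threading.Event()  # thread-safe kill-switch flag

    def kill(self) -> None:
        """Human override: stop all further actions until the controller is reset."""
        self._halted.set()

    def reset(self) -> None:
        """Re-enable the agent after a human has reviewed the situation."""
        self._halted.clear()

    def decide(self, risk_score: float) -> str:
        if self._halted.is_set():
            return "halted"
        if risk_score >= self.approval_threshold:
            return "escalate"  # high-risk actions wait for human approval
        return "proceed"


controller = InterventionController(approval_threshold=0.7)
print(controller.decide(0.2))  # proceed
print(controller.decide(0.9))  # escalate
controller.kill()
print(controller.decide(0.2))  # halted
```

Checking the kill switch before the risk score means an operator's override always takes priority, even for actions that would otherwise be considered low risk.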

Agentic AI Security Careers: Skills Needed for Emerging Roles in the UAE

Agentic AI Security roles focus on securing systems where autonomous agents operate across environments, make decisions, and execute actions with limited human intervention. This creates a need for professionals who can understand AI behavior, system-level interactions, and control mechanisms that govern autonomous execution.

1. AI Systems & Agent Understanding

Professionals need a strong understanding of how large language models, AI agents, and multi-step decision systems operate. This includes how agents plan tasks, use tools, and interact across APIs and enterprise systems, as security risks emerge from behavior, not just access.

2. AI Security & Risk Governance

This involves knowledge of AI-specific risks such as prompt injection, goal misalignment, data manipulation, and multi-agent failures. The focus is on identifying how autonomous systems deviate from intended objectives and how governance frameworks control such behavior.

3. Identity, Access & Policy Control for AI Agents

Unlike traditional cybersecurity roles, professionals must manage machine identities, service accounts, and API-based access used by AI systems. This includes enforcing least-privilege access and ensuring autonomous agents operate within strict policy boundaries.

4. Monitoring, Observability & Intervention Mechanisms

Skills here include designing systems for real-time monitoring of AI behavior, detecting anomalies in decision flows, and implementing intervention mechanisms such as guardrails, override controls, and runtime enforcement systems.

5. Data & Model Security Awareness

Understanding how training data, feedback loops, and model updates impact agent behavior is critical. This includes awareness of risks like data poisoning, model drift, and unintended behavioral changes over time.

Conclusion

Across the discussion, agentic AI risks such as access misuse, goal misalignment, cascade failures, and data manipulation highlight how security challenges are shifting from isolated events to system-wide behavioral risks. These require governance frameworks built on identity control, behavioral monitoring, data integrity, lifecycle management, auditability, and real-time intervention mechanisms to ensure safe and reliable autonomous operations.

Agentic AI Security is therefore becoming a foundational requirement for building trustworthy AI systems at scale. As adoption continues to grow, the ability to secure autonomous decision-making will define how responsibly organizations deploy AI in real-world environments. For learners and professionals, this creates a clear pathway toward future-ready roles in AI, governance, and security-driven AI systems.

Our institute, Novelty Skills Training, Dubai, supports this journey by offering beginner-friendly, industry-aligned AI and ML programs designed to build core skills and career readiness for emerging technology roles.

FAQs

Agentic AI is risky because it can independently plan, decide, and execute actions across systems. This creates risks like access misuse, goal misalignment, cascade failures, and data manipulation that can spread across environments.

Traditional cybersecurity protects systems, networks, and data from external threats. Agentic AI security focuses on governing AI behavior, decisions, and actions that occur autonomously inside and across systems.

By implementing layered governance including identity control, behavioral monitoring, data/model integrity, lifecycle management, auditability, and real-time intervention mechanisms.

Agentic AI Security is the practice of governing and controlling AI systems that can independently make decisions, take actions, and operate across multiple digital environments.

It is important because autonomous AI systems can act without human intervention, increasing risks related to data misuse, wrong decisions, and uncontrolled system behavior.

Yes, the UAE is actively expanding AI adoption across government services and is moving toward more autonomous, agent-like AI systems in public operations.

AI security systems are used in UAE government services, healthcare, finance, smart cities, and enterprise operations to manage risks and ensure safe AI use.

Traditional AI responds to prompts, while agentic AI can independently plan, decide, and execute actions across systems without continuous human input.

Skills include AI system understanding, cybersecurity basics, data governance, access control, and knowledge of how autonomous AI agents behave.

Yes, beginners can start by learning AI fundamentals, cybersecurity basics, and gradually move into advanced topics like AI governance and autonomous systems security.

Yes, it is an emerging high-demand field in the UAE due to rapid AI adoption across government and enterprise systems.

Roles include AI Security Analyst, AI Governance Specialist, AI Risk Consultant, and AI Systems Security Engineer.

Entry-level roles typically start around AED 10,000–18,000 per month, with higher salaries for experienced AI security professionals.

Yes, beginners can enter through AI, cybersecurity, or data roles and gradually move into agentic AI security specializations.

Government entities, fintech companies, healthcare organizations, and AI firms like G42 and other UAE tech enterprises hire in this space.

Skills in AI governance, cybersecurity, machine learning, and AI system monitoring significantly improve salary prospects.

It is an evolving specialization built on cybersecurity, offering higher growth potential due to increasing AI autonomy in systems.